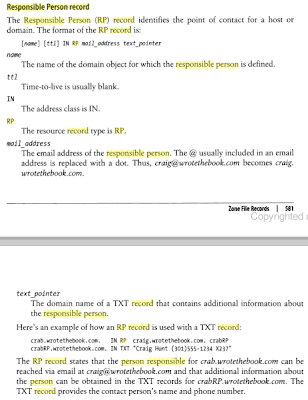

Using Responsible Person Records for Asset Management

Today, while spending some time at the bookstore with my family, I decided to peruse a copy of Craig Hunt's TCP/IP Network Administration. It covers BIND software for DNS. I've been thinking about my post Asset Management Assistance via Custom DNS Records. In the book I noticed a mention of a "Responsible Person" record. That sounds perfect. I found that RFC 1183, from 1990, introduced these.

I decided to try setting up these records on a VM running FreeBSD 7.1 and BIND 9. The VM had IP 172.16.99.130 with gateway 172.16.99.2. I followed the example in Building a Server with FreeBSD 7.

First I made changes to named.conf, as shown in this diff:

    # diff /var/named/etc/namedb/named.conf /var/named/etc/namedb/named.conf.orig
    132c132
    ---
    > zone "16.172.in-addr.arpa" { type master; file "master/empty.db"; };
    274,290d273

To generate the last section I ran the following:

    # rndc-confgen -a
    wrote key file "/etc/namedb/rndc.key"
    # cat rndc.key >...
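As an aside on the record format itself: RFC 1183 defines an RP record as an owner name followed by two domain names, a mailbox (encoded like the SOA RNAME, with the `@` replaced by a dot and any dots in the local part escaped) and the name of a TXT record holding further details, where a lone `.` means "none". The following is only a minimal sketch of how such a zone-file line could be built. The helper names, the host name, and the email addresses are hypothetical and do not come from the setup described above.

```python
def email_to_mbox_dname(email: str) -> str:
    """Encode user@domain as an RP mailbox name (same convention as SOA RNAME)."""
    local, sep, domain = email.rpartition("@")
    if not sep or not local or not domain:
        raise ValueError(f"not an email address: {email!r}")
    # The first unescaped dot stands in for '@', so dots (and backslashes)
    # inside the local part have to be escaped.
    local = local.replace("\\", "\\\\").replace(".", "\\.")
    return f"{local}.{domain.rstrip('.')}."


def rp_record(owner: str, email: str, txt_dname: str = ".") -> str:
    """Format one zone-file line: <owner> IN RP <mbox-dname> <txt-dname>.

    txt_dname defaults to the root name ".", which RFC 1183 uses to
    indicate that no TXT record is associated.
    """
    return f"{owner} IN RP {email_to_mbox_dname(email)} {txt_dname}"


if __name__ == "__main__":
    # Hypothetical host and contact, for illustration only.
    print(rp_record("host1.example.com.", "john.doe@example.com",
                    "asset-info.example.com."))
```

A line produced this way would go into the zone file next to the host's other records. For asset management, the TXT name is a natural place to point at free-form details about the system.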